Pagination Oversight

Good observability doesn't just monitor systems; it surfaces bugs that would otherwise go unnoticed for years. What follows is a concrete example: a latent defect in an S3 listing operation, present since S3 support was first added to the codebase, that only became visible through Traefik access logs and a self-hosted MinIO instance. The bug itself was trivial once found. Finding it wasn't.

Background

The codebase in question is webbukkit/dynmap, a Minecraft server plugin that renders world chunks into a browsable map. Rendering is expensive; chunks are processed incrementally and the results written out as tiles. Dynmap supports multiple storage backends for these tiles, and S3 is a natural fit: tiles are written at a known cadence, rarely mutated, and read far more often than they're written.

That said, S3 is well outside the operational scope of most Minecraft server administrators, which meant this code path had seen very little real-world use, and even less scrutiny.

Invisible Failure

Initial setup was straightforward; the plugin configured cleanly against my self-hosted MinIO instance, and the first batch of tiles rendered and uploaded without issue. The problem was what came next: Dynmap is designed to seed an initial set of tiles and then expand outward from there, progressively covering the map. That expansion never happened. Log output indicated the initial pass had completed, the plugin appeared to be running, but no further tiles were being processed. Nothing in the configuration pointed to a cause.

Chasing Geese

With the Dynmap configuration seemingly sound, attention shifted to the MinIO instance itself. Checking it directly confirmed that tiles were in fact being uploaded, and also surfaced an unrelated issue with uploaded file sizes that warranted its own investigation. MinIO itself appeared healthy: no misconfigurations, no errors, nothing that explained the stall. At that point, the only remaining source of signal was Traefik. If the plugin was talking to MinIO, the access logs would hopefully show something useful.

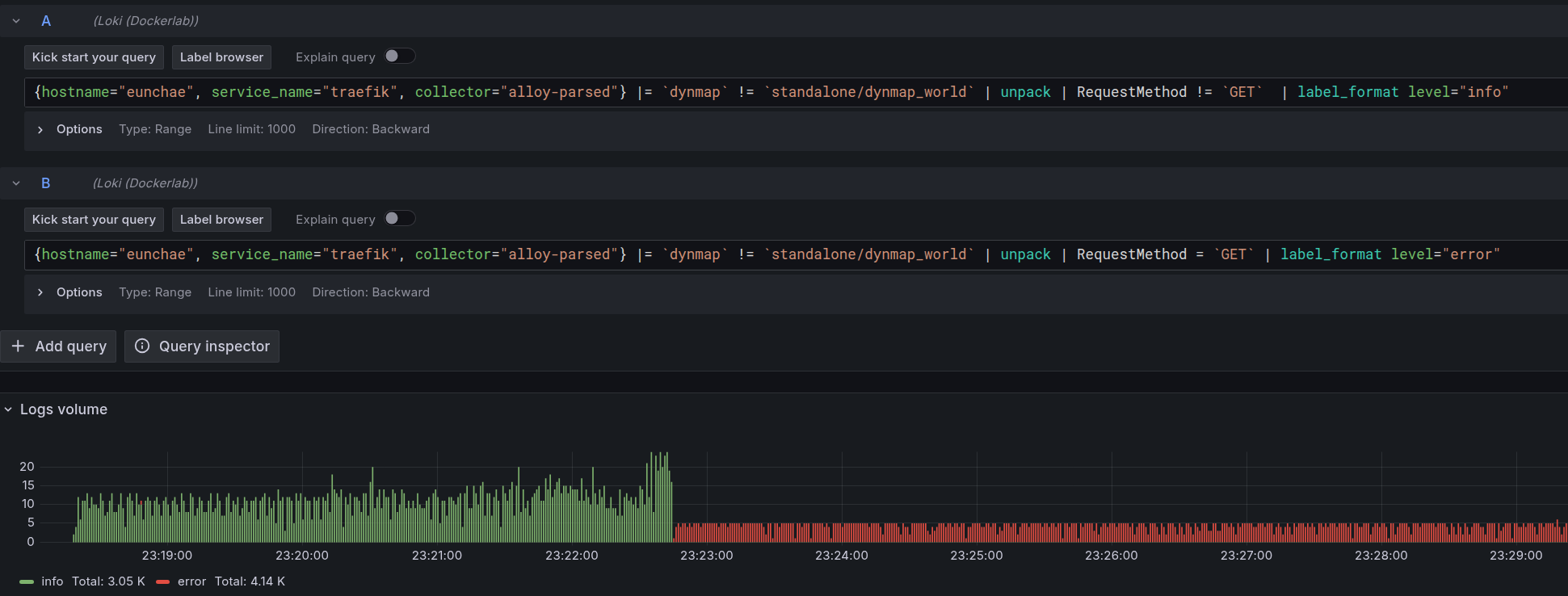

This was made significantly easier by existing infrastructure. Container logs across the stack are shipped to Grafana Loki. Additionally, Traefik's access logs are parsed into discrete fields: method, path, upstream, status, and so on. This meant that querying specifically for traffic destined for MinIO, filtered to Dynmap's requests, was trivial. The resulting view in Grafana looked anomalous at a glance, before any deeper analysis. Coloring requests by method made the story obvious: at a specific moment in time, the log stream flipped to nothing but GETs, with no end.

Root Cause

The timing of that shift was precise enough to correlate directly with the Minecraft instance logs. Cross-referencing the two, the switch to exclusive GET traffic landed exactly at the moment rendering completed: not a coincidence, but a handoff. Something triggered at the end of the render pass was hammering S3 with GET requests and not stopping.

Narrowing to S3 API calls that ran post-render in the codebase pointed quickly to a single list operation. The offending code block was this:

    while (!done) {
        ListObjectsV2Response result = s3.listObjectsV2(req);
        ...
        if (result.isTruncated()) { // If more, build continuiation request
            req = ListObjectsV2Request.builder().bucketName(bucketname)
                .prefix(basekey).delimiter("").maxKeys(1000).continuationToken(result.getContinuationToken()).encodingType("url").requestPayer("requester").build();
        }
        else { // Else, we're done
            done = true;
        }
    }

The code checks isTruncated() on the result, which is standard pagination logic: if the result is truncated, the intent is to fetch the next page.

The problem was in how it requested that next page: it was passing the token from .getContinuationToken() rather than .getNextContinuationToken().

The former returns the token that was used for the current request.

The latter returns the token for the next one.

The end result was that the list was consistently truncated, the continuation token never advanced, and the loop ran indefinitely.

A custom build with the corrected token call confirmed it. Rendering proceeded past the initial pass and continued expanding outward as expected. An issue was filed, a PR submitted, and it was accepted.
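The difference between the two tokens can be illustrated in isolation. The following is a minimal sketch, not Dynmap's code or the S3 client's API: `Page` and `FakeLister` are hypothetical in-memory stand-ins that mimic only the two token accessors described above, and the token is assumed to be a simple start offset.

```java
import java.util.ArrayList;
import java.util.List;

final class PaginationSketch {
    // Hypothetical stand-in for one page of a list response.
    static final class Page {
        private final List<String> keys;
        private final String continuationToken;     // token this page was requested with (null for the first page)
        private final String nextContinuationToken; // token for the following page (null on the last page)

        Page(List<String> keys, String continuationToken, String nextContinuationToken) {
            this.keys = keys;
            this.continuationToken = continuationToken;
            this.nextContinuationToken = nextContinuationToken;
        }

        List<String> getKeys() { return keys; }
        boolean isTruncated() { return nextContinuationToken != null; }
        String getContinuationToken() { return continuationToken; }
        String getNextContinuationToken() { return nextContinuationToken; }
    }

    // Hypothetical in-memory "bucket" that serves keys in fixed-size pages.
    static final class FakeLister {
        private final List<String> allKeys;
        private final int pageSize;

        FakeLister(List<String> allKeys, int pageSize) {
            this.allKeys = allKeys;
            this.pageSize = pageSize;
        }

        Page list(String token) {
            int start = (token == null) ? 0 : Integer.parseInt(token);
            int end = Math.min(start + pageSize, allKeys.size());
            String next = (end < allKeys.size()) ? Integer.toString(end) : null;
            return new Page(new ArrayList<>(allKeys.subList(start, end)), token, next);
        }
    }

    // Same loop shape as the original, with the corrected token accessor.
    static List<String> listAllKeys(FakeLister lister) {
        List<String> out = new ArrayList<>();
        String token = null;
        boolean done = false;
        while (!done) {
            Page page = lister.list(token);
            out.addAll(page.getKeys());
            if (page.isTruncated()) {
                // Advance with the NEXT token. Using getContinuationToken() here
                // would re-request the page that was just received, forever.
                token = page.getNextContinuationToken();
            } else {
                done = true;
            }
        }
        return out;
    }

    public static void main(String[] args) {
        FakeLister lister = new FakeLister(List.of("a", "b", "c", "d", "e"), 2);
        System.out.println(listAllKeys(lister)); // prints [a, b, c, d, e]
    }
}
```

In this sketch, the first page is requested with no token, so its `getContinuationToken()` is null. Feeding that back into the next request would fetch the first page again, which is the same non-advancing behavior seen in the access logs.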

Closing Thoughts

This class of bug is straightforwardly preventable with a unit test. Mocking the S3 list response with a truncated result and a mismatched continuation token would have caught it immediately. That's a low bar that's easy to miss when a code path sees almost no real-world exercise.
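A minimal sketch of what such a test might look like, written in plain Java without a test framework or mocking library. Everything here is hypothetical scaffolding rather than Dynmap's code or the S3 client's API: `Page`, `pagedSource`, `listAll`, and the request cap are all assumptions made for illustration. The cap is what turns an infinite loop into a test failure instead of a hang.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

final class PaginationGuardTest {
    // Hypothetical stand-in for one page of a list response.
    static final class Page {
        final List<String> keys;
        final String continuationToken;     // echoes the token used for this request
        final String nextContinuationToken; // null when there are no further pages

        Page(List<String> keys, String continuationToken, String nextContinuationToken) {
            this.keys = keys;
            this.continuationToken = continuationToken;
            this.nextContinuationToken = nextContinuationToken;
        }

        boolean isTruncated() { return nextContinuationToken != null; }
    }

    // Fake paged source: the token is just the start offset encoded as a string.
    static Function<String, Page> pagedSource(List<String> keys, int pageSize) {
        return token -> {
            int start = (token == null) ? 0 : Integer.parseInt(token);
            int end = Math.min(start + pageSize, keys.size());
            String next = (end < keys.size()) ? Integer.toString(end) : null;
            return new Page(keys.subList(start, end), token, next);
        };
    }

    // Loop under test. tokenPicker selects which token feeds the next request;
    // maxRequests makes a non-advancing loop fail fast.
    static List<String> listAll(Function<String, Page> source,
                                Function<Page, String> tokenPicker,
                                int maxRequests) {
        List<String> out = new ArrayList<>();
        String token = null;
        for (int requests = 0; requests < maxRequests; requests++) {
            Page page = source.apply(token);
            out.addAll(page.keys);
            if (!page.isTruncated()) {
                return out;
            }
            token = tokenPicker.apply(page);
        }
        throw new IllegalStateException(
            "pagination did not finish within " + maxRequests + " requests");
    }

    public static void main(String[] args) {
        List<String> keys = List.of("t0", "t1", "t2", "t3", "t4");
        Function<String, Page> source = pagedSource(keys, 2);

        // Correct token: every key is returned exactly once.
        List<String> ok = listAll(source, p -> p.nextContinuationToken, 10);
        if (!ok.equals(keys)) throw new AssertionError("expected all keys, got " + ok);

        // Wrong token: the first page repeats until the cap trips.
        boolean tripped = false;
        try {
            listAll(source, p -> p.continuationToken, 10);
        } catch (IllegalStateException e) {
            tripped = true;
        }
        if (!tripped) throw new AssertionError("buggy token choice was not detected");
        System.out.println("ok");
    }
}
```

The important property of the fake is that the echoed token and the next token differ on every truncated page, so picking the wrong one cannot pass by accident.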

The cost dimension is also worth noting. Against real S3, an infinite list loop isn't just a correctness problem; it's a billing problem. MinIO absorbed this silently, which was genuinely fortunate. Had this been wired up against AWS from the start, the feedback would have come in the form of an invoice rather than an anomalous Grafana panel.

That said, it's worth keeping perspective. This is a Minecraft plugin, the S3 backend is a niche configuration within that already niche context, and the bug survived undetected for three years for exactly that reason. Expecting thorough coverage of an edge case that almost nobody exercises isn't entirely reasonable. What matters is that when someone finally did exercise it, the infrastructure existed to find out why it was broken.